How AI Decides What To Cite (And Why Most Brands Get It Wrong)

May 11, 2026

George Assimakopoulos


Contributing Author:

George Assimakopoulos – Managing Principal @ Metric Centric

A few weeks ago, I asked three different answer engine platforms the same question:

“Who are the top firms in [a specific B2B category]?”

The results were revealing.

Some well-known brands appeared consistently. Others – equally credible, equally capable – were nowhere to be found.

Not buried. Not ranked lower.

Completely absent.

That’s when it hit me: Visibility in AI isn’t about how good your company is. It’s about how well your content aligns with how AI decides what to cite.

We’ve moved from a world of “show me links” to “tell me what’s true” – and that shift changes everything.

Traditional search engines ranked pages. AI selects, synthesizes and cites.

That means your brand is either represented in the answer or invisible in the conversation.

There’s very little middle ground.

For business leaders, this creates a new reality: Great expertise and strong SEO rankings are no longer enough. If your brand isn’t structured to be understood, validated and surfaced by AI, you risk disappearing from the very moments influencing customer decisions.

The companies that adapt early will shape how AI understands their category. The ones that don’t may never enter the conversation at all.

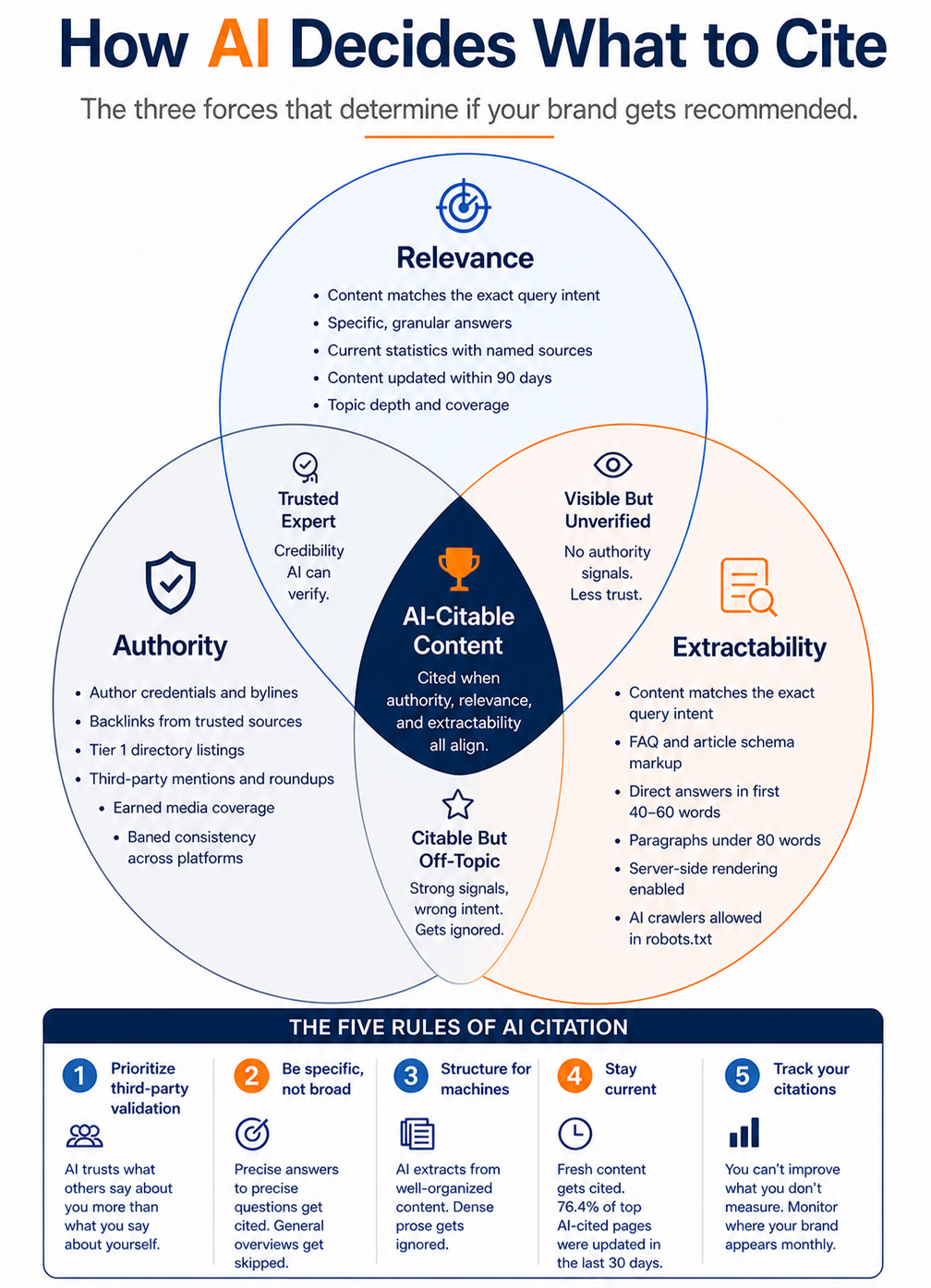

The Three Forces That Drive AI Citations

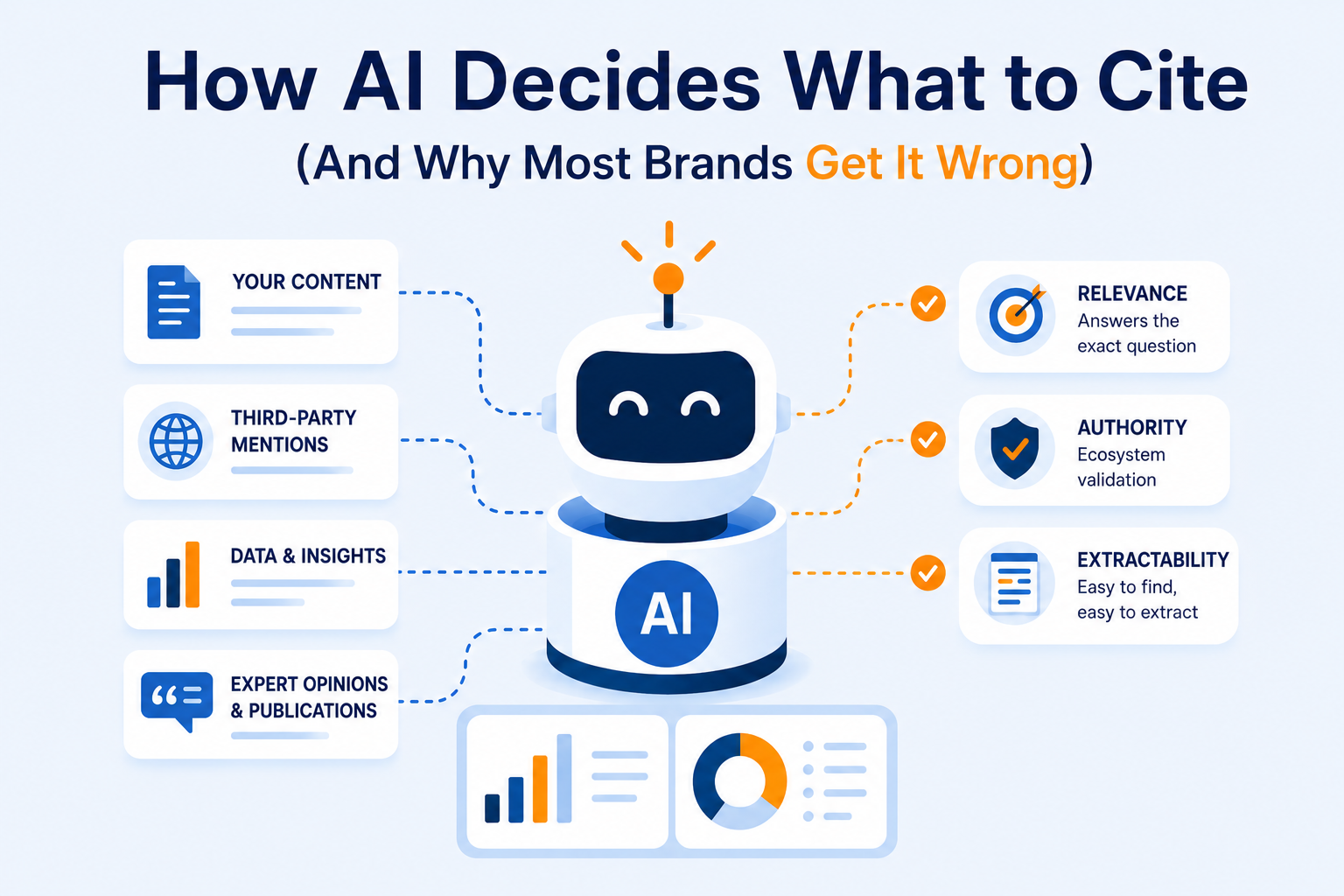

Through our work at Metric Centric analyzing both conversational data and AI-generated answers, we’ve found that three forces consistently determine whether a brand gets cited:

1. Relevance – Do you answer the exact question?

This sounds obvious. It’s not.

I once reviewed a client’s content library: hundreds of pages, beautifully written. But when we tested it against AI queries, almost none of it surfaced.

Why?

Because that content was written for broad audiences – not specific questions.

AI looks for:

- Direct answers

- Clear intent matching

- Specificity over storytelling

Great content is not the same as citable content.

2. Authority – Does the ecosystem validate you?

We worked with a B2B brand that had incredible internal expertise – PhDs, decades of experience, deep insights.

But AI barely cited them.

Meanwhile, a smaller competitor kept showing up.

The difference wasn’t necessarily expertise. It was validation.

The competitor had:

- Third-party mentions

- Directory listings

- Consistent brand presence across platforms

AI doesn’t just trust what you say about yourself. It trusts what others say about you.

3. Extractability – Can AI actually use your content?

This is the most overlooked – and most fixable – factor.

The same B2B brand said: “We have the answer… it’s just buried in paragraph eight.”

That’s the problem.

AI isn’t reading like a human. It’s scanning for:

- Clean structure

- Short, direct answers

- Schema markup

- Crawlable, accessible content

If your content can’t be easily extracted, it won’t be cited – even if the information itself is perfect.
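To make the schema markup item concrete, here is a minimal sketch that turns a question-and-answer pair into FAQPage structured data using the public schema.org vocabulary. The helper names and the sample question are hypothetical, and markup like this only makes content easier to parse; it does not guarantee that any answer engine will cite it.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap the object in the script tag used to embed JSON-LD in a page."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical question and answer, for illustration only.
pairs = [
    (
        "What does an answer engine audit cover?",
        "It checks whether a brand is cited, misrepresented, or missing "
        "in AI-generated answers.",
    )
]

markup = as_script_tag(faq_jsonld(pairs))
print(markup)
```

The point of the sketch is the shape of the content: one clearly stated question, one short answer, both labeled, rather than an answer buried in paragraph eight.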

This is where we see most brands fall short. Their content is:

- Trusted but not extractable: great expertise, but hard to parse

- Visible but not authoritative: easy to find, but lacks trust signals

- Credible but off topic: strong brand, wrong answers

The brands that consistently show up in AI results? They align all three: Authority + Relevance + Extractability.

The Five Rules of AI Citation

If you’re thinking about how to act on this, here’s a simple framework we use with clients:

1. Prioritize third-party validation

AI trusts the ecosystem more than your homepage.

2. Be specific, not broad

Answer real questions directly. Skip the fluff.

3. Structure for machines

If AI can’t extract it, it won’t cite it.

4. Stay current

Freshness matters more than most brands realize.

5. Track your citations

If you’re not measuring AI visibility, you’re guessing.
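As a rough illustration of rule 5, the sketch below counts how often each brand appears in a set of saved AI answers. Everything in it is an assumption for illustration: the answers are hypothetical text copied by hand, the brand names are invented, and the function name `citation_rates` is ours, not part of any tool.

```python
from collections import Counter


def citation_rates(answers, brands):
    """Return the share of answers that mention each brand at least once.

    Matching is a case-insensitive substring check, which is crude: it
    cannot tell a real citation from a passing mention.
    """
    counts = Counter()
    for text in answers:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(answers)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical answers saved by hand from three answer engines.
answers = [
    "Top firms include Acme Analytics and Northwind Research.",
    "Acme Analytics is frequently recommended for this category.",
    "Consider Northwind Research or Contoso Insights.",
]
brands = ["Acme Analytics", "Northwind Research", "Example Co"]

rates = citation_rates(answers, brands)
for brand, rate in rates.items():
    print(f"{brand}: cited in {rate:.0%} of answers")
```

A brand that never appears in the saved answers comes out at zero, which is the "completely absent" case described earlier. Repeating the same questions over time turns a one-off check into a trend.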

Sometimes it’s easier to see it than to read it. So, here’s a simple visual we use with clients to make sense of all this:

A Final Thought: AI Remembers. Humans Imagine.

One of the biggest misconceptions about AI is that it “creates” answers from scratch.

It doesn’t.

It’s remembering, synthesizing and selecting from what already exists.

That means if your content isn’t structured to be learned, validated by others and aligned to real questions, it simply won’t exist in the answers that matter.

Most brands are still optimizing for search rankings. Very few are optimizing for answer inclusion.

That gap? That’s the opportunity.

If you’re wondering how AI understands your brand today – and whether you’re being cited, misrepresented, or missed entirely – that’s exactly what we help uncover at Metric Centric. Connect with us today – let’s make sure your expertise is visible to both people and machines.
